Gemma 4, the latest generation of open models from Google DeepMind, was released on April 2, 2026. Google describes it as its most intelligent open model family to date. According to Google, it delivers more intelligence per parameter than earlier releases and is designed for advanced reasoning and agentic workflows.

Key Capabilities and Features

The Gemma 4 models cover a wide range of capabilities:

Advanced Reasoning: The models are designed for multi-step planning and deep logic, and show significant improvements on math and instruction-following benchmarks.

Agentic Workflows: Native support for function calling, structured JSON output, and system instructions lets developers build autonomous agents that interact with a variety of tools and APIs.

Multimodal: All models natively process video and images at various resolutions and perform well on visual tasks such as OCR and chart understanding. The smaller E2B and E4B models also accept native audio input for speech recognition and understanding.

Code Generation: Gemma 4 supports high-quality offline code generation, turning a workstation into a local-first AI coding assistant.

Longer Context: The edge models offer a 128K context window, and the larger models extend it to 256K, so extensive material such as code repositories or lengthy papers can be processed in a single prompt.

Multilingual Support: Gemma 4 was natively trained on more than 140 languages, which helps developers build high-performing apps that serve a worldwide user base.
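To make the function-calling and structured JSON output capability above more concrete, here is a minimal sketch of the application side of an agent loop: the model emits a JSON tool call, and the app parses it and runs the matching tool. The JSON shape (`name` and `arguments`), the `get_weather` tool, and the `dispatch_tool_call` helper are all assumptions made for illustration. This is not Gemma 4's actual tool-call format, which is defined by the official documentation and the serving library you use.

```python
import json

# Hypothetical tool; a real agent would call an external API here.
def get_weather(city: str) -> str:
    return f"Sunny in {city}"

# Registry mapping tool names to Python callables (illustrative only).
TOOLS = {"get_weather": get_weather}

def dispatch_tool_call(model_output: str) -> str:
    """Parse a JSON tool call produced by a model and run the matching tool.

    Assumes the model was instructed to answer with an object of the form
    {"name": <tool name>, "arguments": {<keyword arguments>}}.
    """
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model output is not valid JSON: {exc}") from exc

    if not isinstance(call, dict):
        raise ValueError("tool call must be a JSON object")

    name = call.get("name")
    args = call.get("arguments", {})
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name!r}")
    if not isinstance(args, dict):
        raise ValueError("'arguments' must be a JSON object")

    # Call the tool with the arguments supplied by the model.
    return TOOLS[name](**args)

# Stand-in for text returned by the model (assumed, not real model output).
example_output = '{"name": "get_weather", "arguments": {"city": "Paris"}}'
print(dispatch_tool_call(example_output))
```

Validating the name and arguments before dispatching matters in practice, because a model can emit malformed JSON or name a tool that does not exist.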

Model Sizes and Architectures

Gemma 4 is built for a range of hardware, from mobile devices to servers, and comes in four sizes:

Effective 4B (E4B) and Effective 2B (E2B): These models are optimized for on-device use. They prioritize multimodal capabilities, low-latency processing, and seamless ecosystem integration. The “E” stands for “effective” parameters: the models use Per-Layer Embeddings (PLE) to maximize parameter efficiency in on-device deployments.

26B Mixture of Experts (MoE): This model is built for fast inference. It activates only a 4B subset of its parameters during inference, so it runs faster than its total parameter count would suggest.
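The arithmetic behind the MoE claim can be sketched in a few lines. The 26B and 4B figures come from the text above. The helper name `active_fraction` is hypothetical, and treating per-token compute as proportional to active parameters is a simplifying assumption: real speed also depends on memory bandwidth, routing overhead, and hardware, and all 26B parameters still need to be held in memory.

```python
def active_fraction(total_params_b: float, active_params_b: float) -> float:
    """Share of a model's parameters that are used for each token."""
    return active_params_b / total_params_b

total_b = 26.0   # total parameters in billions (from the text)
active_b = 4.0   # parameters activated during inference, in billions (from the text)

frac = active_fraction(total_b, active_b)
print(f"{frac:.1%} of parameters are active per token")  # 15.4%

# Assumption: per-token compute scales roughly with active parameters,
# so a dense model of the same total size would do about this many times
# more work per token.
compute_ratio = total_b / active_b
print(f"about {compute_ratio:.1f}x less per-token compute than a dense 26B model")  # 6.5x
```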

Open Source and Accessibility

Gemma 4 is released under the commercially permissive Apache 2.0 license, which gives developers full flexibility and control over their data, infrastructure, and models. This open approach allows unrestricted development in a variety of settings and enables secure deployment both on-premises and in the cloud. The models can run locally on user devices, including some laptop GPUs and billions of Android devices. They are also available through platforms such as Google Cloud, Google AI Studio, and Google AI Edge Gallery.

Performance and Impact

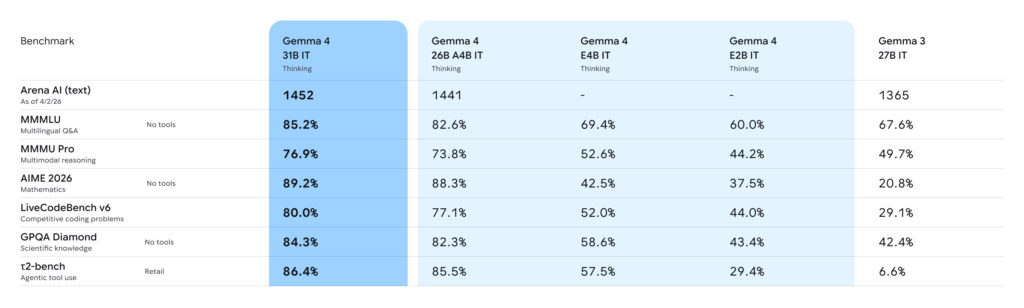

Gemma 4 models deliver state-of-the-art performance for their sizes. On the Arena AI text leaderboard, the 31B model currently ranks as the #3 open model worldwide, and the 26B model, which outperforms models 20 times larger, ranks #6. This “intelligence-per-parameter” approach makes frontier-level capabilities attainable with much less hardware overhead. Since the release of the first generation, developers have downloaded Gemma models more than 400 million times, creating a thriving “Gemmaverse” of over 100,000 variants. Gemma 4 continues this momentum, building on the same research and technology as Gemini 3.

Source: Google, https://blog.google/innovation-and-ai/technology/developers-tools/gemma-4/